Logistic regression

Logistic regression is an algorithm for binary classification. Here is an example of a binary classification problem: given an input image, you want to output a label that recognizes the image either as a cat, in which case you output 1, or as not-cat, in which case you output 0. We use y to denote this output label.

In a binary classification problem, the output y is a discrete value: either 0 or 1.

For example

- account hacked (1) or compromised (0)

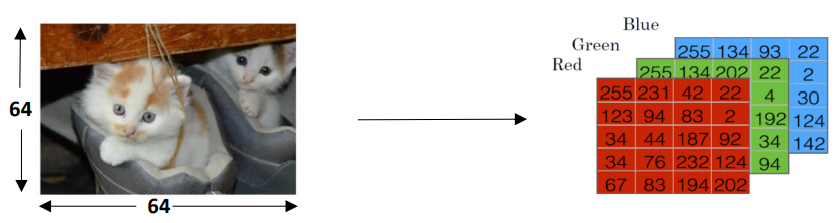

- a tumor malign (1) or benign (0)Let's look at how an image is represented in a computer. To store an image your computer stores three separate matrices corresponding to the red, green, and blue color channels of this image.

So if your input image is 64 pixels by 64 pixels, you have three 64 by 64 matrices holding the red, green, and blue pixel intensity values of your image.
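The following is a minimal Python sketch of this representation. It assumes each channel is stored as a list of 64 rows, each holding 64 integer intensities in the usual 8-bit range of 0 to 255. The `make_channel` helper and the constant intensity values are hypothetical and exist only to make the shapes easy to check.

```python
# Image size from the text: 64 x 64 pixels, one matrix per color channel.
HEIGHT, WIDTH = 64, 64

def make_channel(value):
    """Return a HEIGHT x WIDTH matrix (a list of rows) filled with one intensity."""
    return [[value for _ in range(WIDTH)] for _ in range(HEIGHT)]

# Dummy intensities; a real photo would have a different value at each pixel.
image = {
    "red": make_channel(255),
    "green": make_channel(128),
    "blue": make_channel(0),
}

for name, channel in image.items():
    print(name, len(channel), "x", len(channel[0]))
```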

To turn these pixel intensity values into a feature vector, we unroll all of them into a single input feature vector x corresponding to this image.

We take all the red pixel values, then all the green pixel values, then all the blue pixel values, and list them one after another. If the image is 64 by 64, the total dimension of x is 64 * 64 * 3 = 12288, because that is the total number of values across the three matrices.
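The unrolling step can be sketched in plain Python as follows. The sketch assumes the channels are lists of rows, as for a 64 by 64 image. It stacks all red values first, then all green values, then all blue values. `make_channel` and `unroll` are hypothetical helpers, and the constant intensities are dummy data. The exact order is a convention. What matters is that every image is unrolled the same way, so that position i of x always refers to the same pixel and channel.

```python
HEIGHT, WIDTH = 64, 64

def make_channel(value):
    """Return a HEIGHT x WIDTH matrix (a list of rows) filled with one intensity."""
    return [[value for _ in range(WIDTH)] for _ in range(HEIGHT)]

def unroll(red, green, blue):
    """Flatten three HEIGHT x WIDTH channel matrices into one feature vector x.

    All red values come first (row by row), then all green values, then all blue.
    """
    x = []
    for channel in (red, green, blue):
        for row in channel:
            x.extend(row)
    return x

red, green, blue = make_channel(255), make_channel(231), make_channel(42)
x = unroll(red, green, blue)
n_x = len(x)  # dimension of the input feature vector
print(n_x)  # 12288
```

With this ordering, x[0] is the first red value, x[4096] is the first green value, and x[8192] is the first blue value.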

We use n_x = 12288 to denote the dimension of the input feature vector x. In binary classification, our goal is to learn a classifier that takes as input an image represented by this feature vector x and predicts whether the corresponding label y is 1 or 0, that is, whether this is a cat image or a non-cat image.
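To make the input/output contract concrete, here is a minimal sketch of how a logistic regression classifier could map x to a label. It uses the standard form y_hat = sigmoid(w·x + b) and thresholds at 0.5. These notes have not introduced that form yet, so treat it as an assumption here. The weights `w`, the bias `b`, and the dummy input are hypothetical, untrained values. Learning them is the training step, which is not shown.

```python
import math

def sigmoid(z):
    """Logistic function, mapping any real z into (0, 1); written to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict(x, w, b, threshold=0.5):
    """Return 1 (cat) or 0 (non-cat) for feature vector x.

    w must have the same length n_x as x; w and b are hypothetical parameters.
    """
    if len(x) != len(w):
        raise ValueError("x and w must both have length n_x")
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    y_hat = sigmoid(z)  # estimated probability that y = 1
    return 1 if y_hat >= threshold else 0

n_x = 12288
x = [0.5] * n_x     # dummy feature vector, e.g. pixel intensities scaled to [0, 1]
w = [0.001] * n_x   # hypothetical, untrained weights
b = -5.0            # hypothetical bias
print(predict(x, w, b))  # 1
```

Here z is about 12288 * 0.0005 - 5 = 1.144. Since z is positive, y_hat is above 0.5 and the prediction is 1. Lowering b to -10 would make z negative and flip the prediction to 0.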